Beyond the Loop

Human in the loop is a good place to start, but working with AI as a genuine partner — where roles stay fluid and context compounds over time — unlocks work you can't build alone.

Working with AI is messy on both sides — probabilistic outputs, answers that have to be argued with, judgment calls neither side could make alone. The disproportionate outcomes come from staying in the back-and-forth instead of trying to engineer the mess away.

AI doesn't have to be something that happens to you. Personal use is where you build the relationship, the judgment, and the agency to shape how AI shows up in your work and life.

When AI gives customers bad recommendations, who answers for it? Operational AI governance requires clear decision rights, outcome accountability, and context auditability.

2026 is AI's reckoning year. LLM leaderboards miss the point — what matters is ROI, accountability, and designing human-AI workflows for real operational value.

AI pushes toward resolution even when questions deserve to stay open. Effective AI collaboration means learning to hold tension and resist premature answers on complex problems.

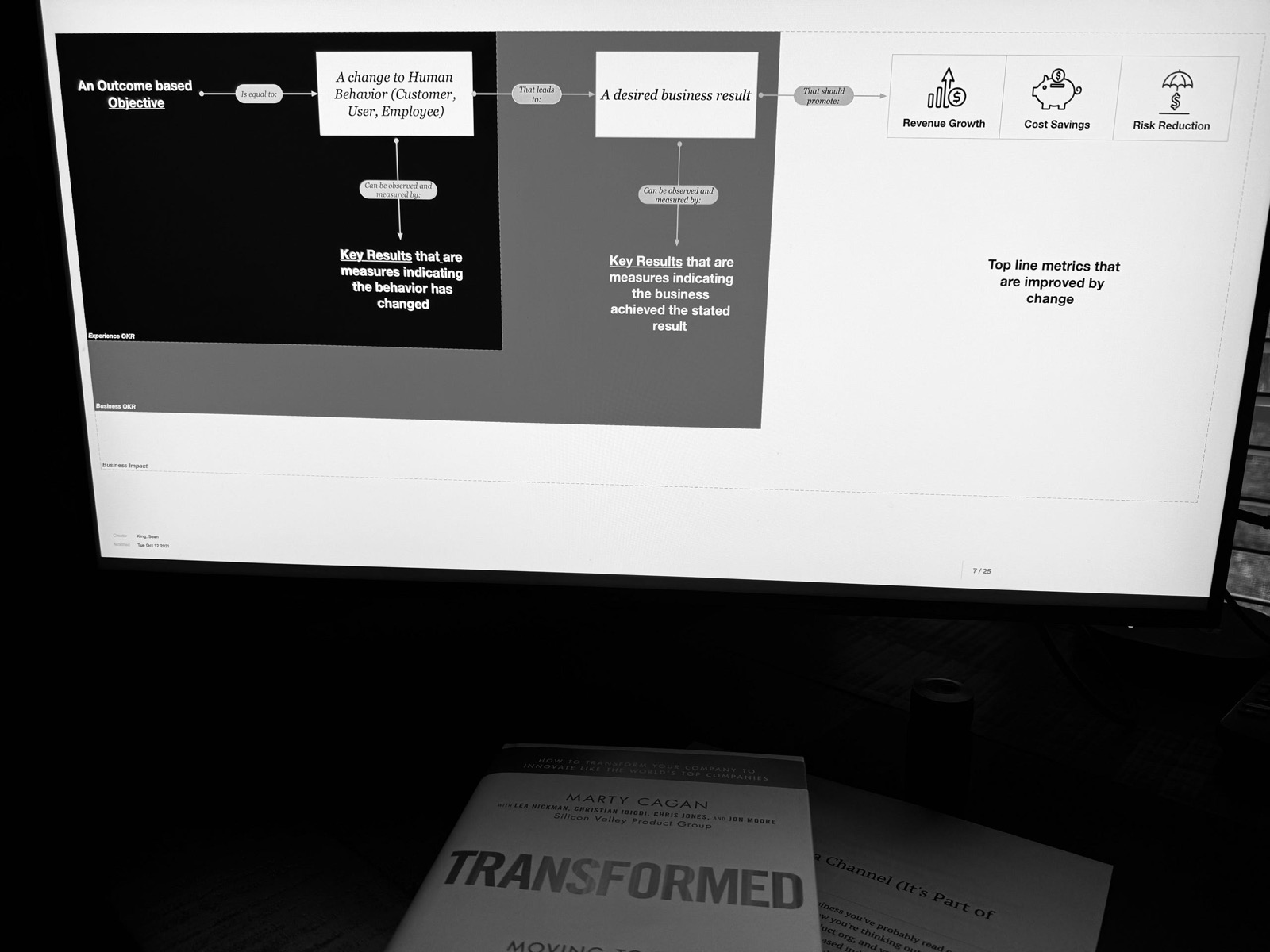

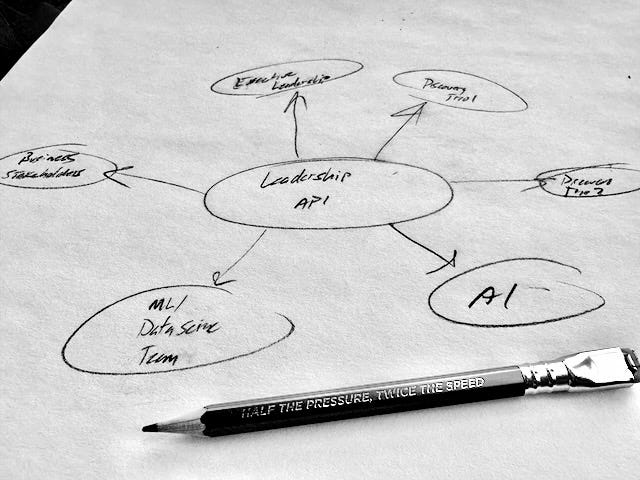

Combining the DARE framework with the Partnership Matrix to define clear decision roles in human-AI collaboration. Someone must always be the accountable Decider.

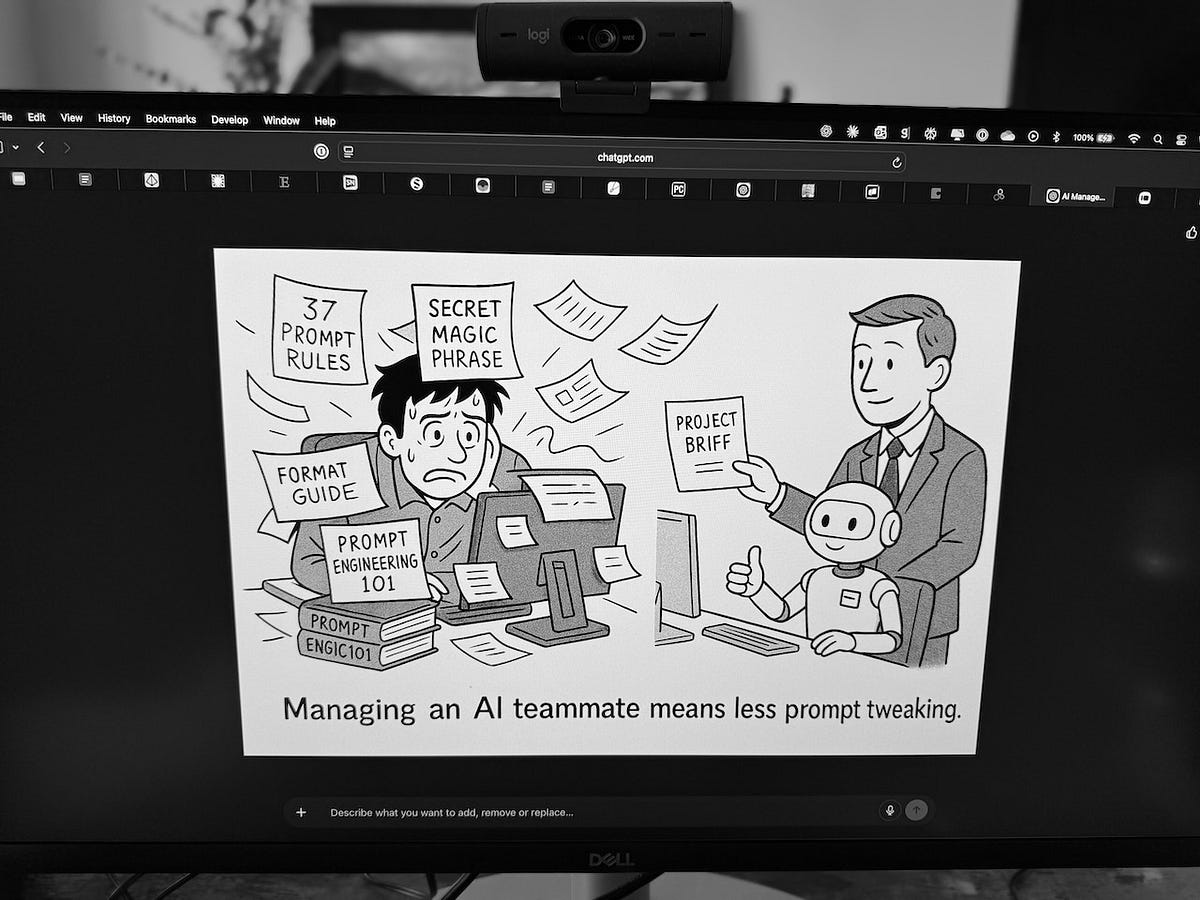

Forget prompt engineering frameworks. The management skills you already have — clear delegation, context-setting, verification habits — are what actually drive better AI results.

AI exhibits the behavioral patterns of psychopathy without the psychology. Understanding AI as alien pattern-matching, not human thinking, changes how we work with it.

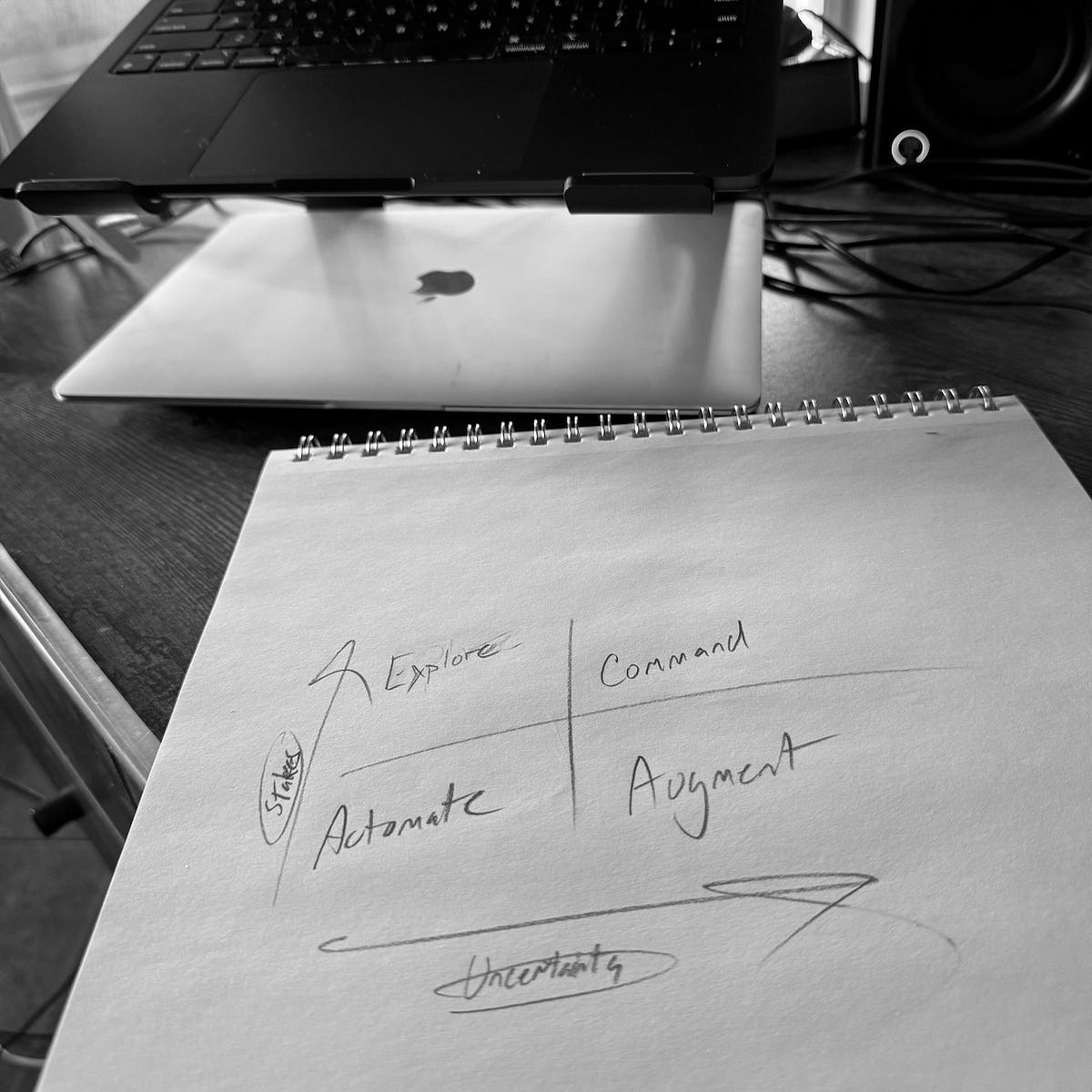

A 2x2 framework mapping stakes and uncertainty to define how humans and AI should collaborate, from full automation to human-led command decisions.

AI writing follows distinct patterns that mirror programming structures — loops, conditionals, variable assignments. Recognizing them is the first step to finding your own voice with AI.

Start using AI with low-stakes personal tasks to build intuition for what works. The skills you develop — context-setting, structured requests — transfer directly to professional use.

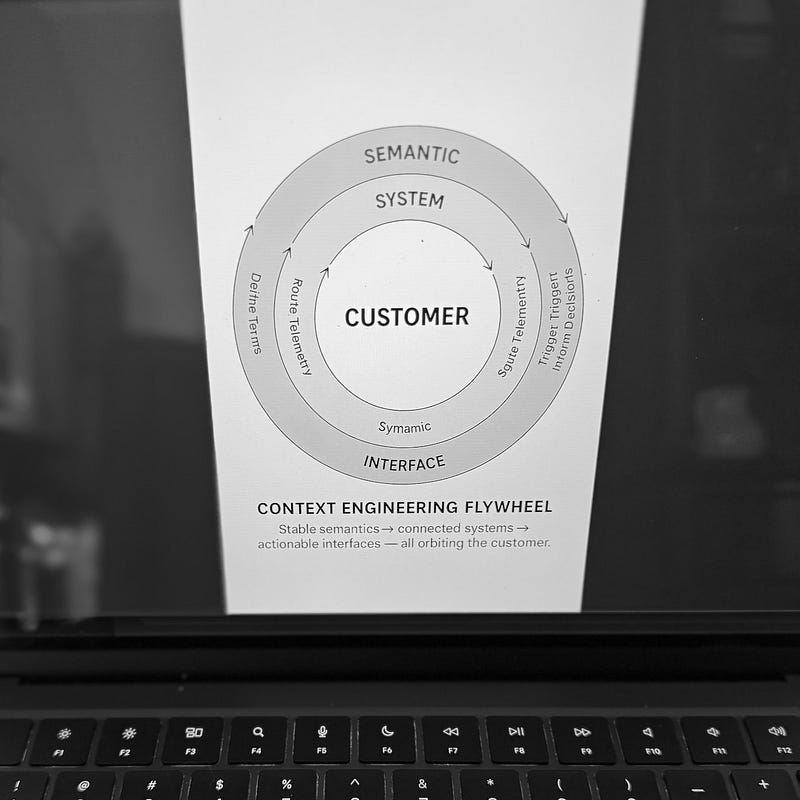

A year after the term context engineering was coined, the idea has evolved from a simple observation into infrastructure, tooling, and a knowledge management discipline.

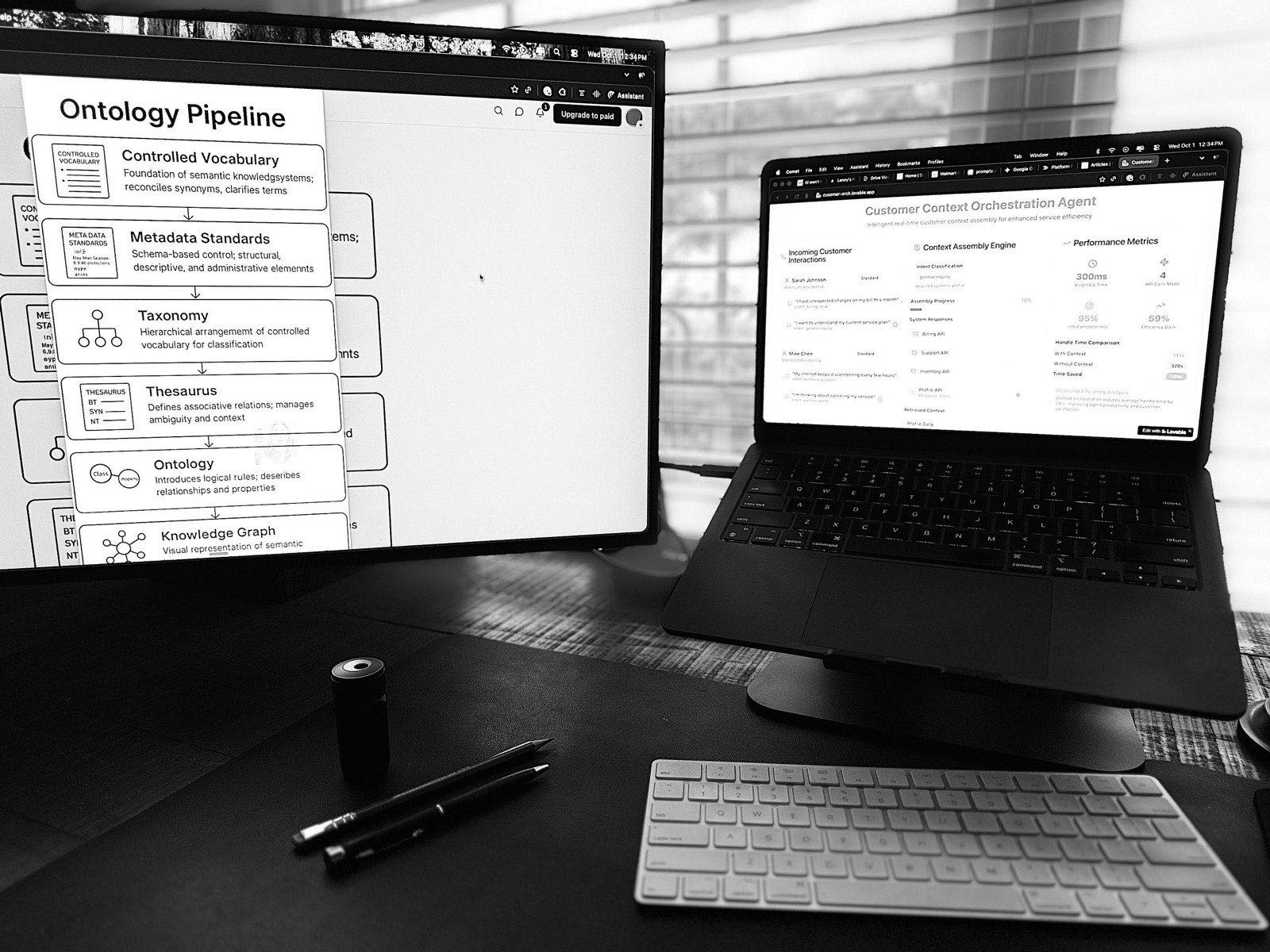

When sales, support, and billing define ‘customer’ differently, forcing consensus breaks operational context. A federated approach preserves each team’s meaning.

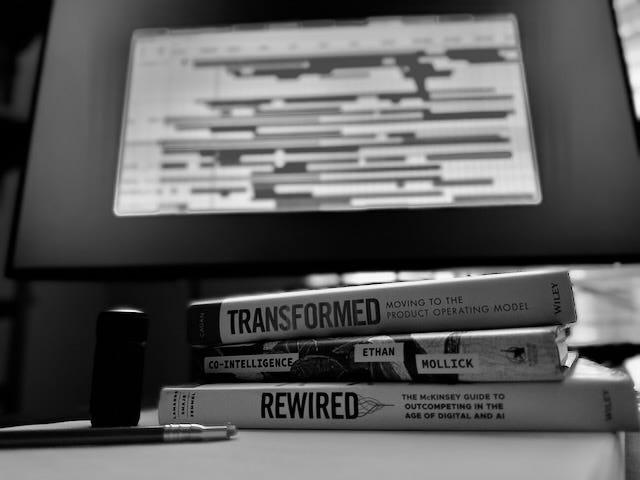

Context engineering is bigger than prompt optimization. It’s a discipline of preserving meaning across boundaries — practiced by historians, lawyers, and translators long before AI.

Most companies organize data around internal systems, not customers. Customer-based context engineering creates a unified index across systems without replacing them.

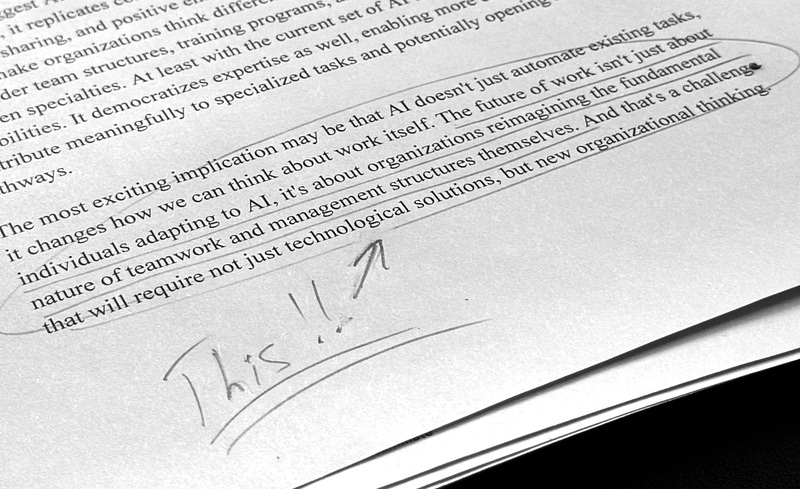

Systems share data easily but lose meaning at every handoff. Designing for coherence (shared context across teams, tools, and time) is the real systems challenge.

Domain expertise multiplies AI's effectiveness. Research shows experts ask better questions, spot errors, and build on AI outputs in ways novices cannot.

How you organize and present information to AI matters more than the model itself. A framework for context engineering across knowledge architecture, integration, and implementation.

The product operating model assumes digital teams can own outcomes end-to-end. In service businesses, they can’t — here’s how to structure shared accountability.

Standard product metrics fail at service companies where software supports the business, not the other way around. Measure call deflection, service efficiency, and operational outcomes instead.

Operational industries force humans to act as middleware between broken systems. AI can finally eliminate swivel-chair coordination work — if leaders redesign workflows instead of just automating them.

AI interfaces shouldn’t default to chat boxes. Teams that build platform logic and preserve context can embed intelligence where users already work.

Your teams already have the business logic AI needs; it's just implicit. Making tacit decision-making explicit is the real challenge of AI integration.

AI-driven velocity without purpose leads teams to ship faster while mattering less. Product leaders need a context roadmap alongside their feature roadmap.

AI won’t replace product leaders, but it transforms the role. Five practices for leading hybrid human-AI teams through uncertainty and probabilistic systems.

AI products behave unpredictably, creating a three-way accountability relationship between teams, systems, and users. Product leaders must shift from controlling outputs to shaping system behavior.

AI-native products behave differently from traditional software. Product leaders must move beyond features and backlogs into systems thinking and emergent behavior.

New research shows AI-augmented individuals match two-person teams while feeling more energized. Product leaders should use AI to reclaim strategic thinking time, not just automate tasks.

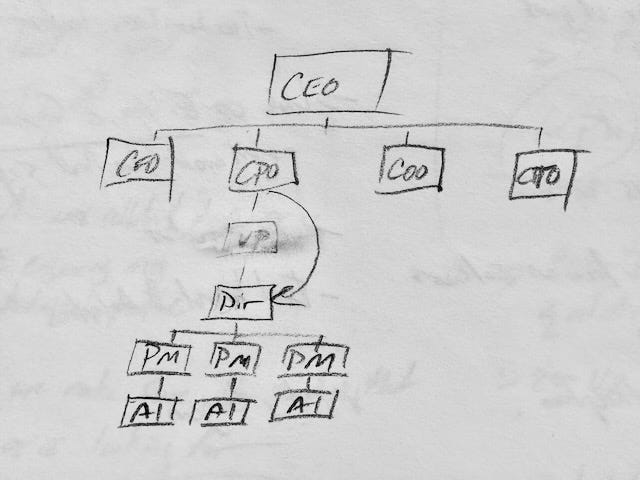

Senior product leadership roles are vanishing as AI handles coordination work. The future of product leadership is designing decision systems, not managing headcount.

AI won't fix Agile by automating ceremonies. Product leaders must choose: use AI to build Feature Factory 2.0, or redesign how teams collaborate and make decisions.

AI is permanently flattening product organizations, not just during downturns. The PM role is splitting into technical Super ICs and strategic advisors as coordination work gets automated.

AI and economic pressure are flattening product organizations. The traditional PM career ladder may not survive as smaller, AI-augmented teams replace layers of management.